Total 226 Questions

Last Updated On : 24-Apr-2026

Preparing with Salesforce-Platform-Development-Lifecycle-and-Deployment-Architect practice test 2026 is essential to ensure success on the exam. It allows you to familiarize yourself with the Salesforce-Platform-Development-Lifecycle-and-Deployment-Architect exam questions format and identify your strengths and weaknesses. By practicing thoroughly, you can maximize your chances of passing the Salesforce certification 2026 exam on your first attempt. Surveys from different platforms and user-reported pass rates suggest Salesforce Certified Platform Development Lifecycle and Deployment Architect practice exam users are ~30-40% more likely to pass.

Since Universal Containers (UC) has adopted agile methodologies, the CEO is requesting the development teams to deliver more and more work in shorter time frames. The CTO responds by saying the developers are not able to deliver the jobs they are committing to.

What evidence can be gathered in an agile tool to support the CTO’s claims?

A. The definition of done (DoD)

B. A burndown chart showing team finishes early sprint after sprint

C. A Kanban board showing there’s always the maximum allowed amount of work in progress (WIP)

D. A burndown chart showing the team misses their forecast sprint after sprint

Explanation:

A burndown chart is a core agile artifact that tracks the completion of work throughout a sprint. It plots the remaining work against the time left in the sprint.

Evidence of a Problem: If a burndown chart consistently shows that the team is failing to complete the work they committed to (the "forecast") by the end of the sprint, it is direct, empirical evidence supporting the CTO's claim. The line on the chart would consistently end above the zero point, sprint after sprint.

Quantifiable Data: This isn't a subjective opinion; it's quantifiable data that demonstrates a systemic issue. It shows that there is a persistent gap between what the team plans (its capacity/velocity) and what it actually delivers. This data can be used to have a factual conversation with the CEO about realistic expectations and the need to address the underlying causes of the delivery problem.
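As a rough illustration of the kind of data a burndown chart summarizes, the sketch below flags sprints whose burndown line ends above zero. The sprint names and point values are made-up sample data, and the `Sprint` class is a hypothetical structure, not the export format of any particular agile tool.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Sprint:
    name: str
    committed_points: int  # forecast agreed at sprint planning
    completed_points: int  # work that met the definition of done by sprint end


def remaining_at_end(sprint: Sprint) -> int:
    """Points still open when the sprint closed (where the burndown line ends)."""
    return max(sprint.committed_points - sprint.completed_points, 0)


def missed_forecasts(sprints: List[Sprint]) -> List[str]:
    """Names of sprints in which the team did not finish its forecast."""
    return [s.name for s in sprints if remaining_at_end(s) > 0]


# Hypothetical sprint history, for illustration only.
history = [
    Sprint("Sprint 1", committed_points=40, completed_points=31),
    Sprint("Sprint 2", committed_points=42, completed_points=30),
    Sprint("Sprint 3", committed_points=38, completed_points=38),
    Sprint("Sprint 4", committed_points=45, completed_points=33),
]

missed = missed_forecasts(history)
print(missed)
```

A pattern in which most sprints appear in `missed` is the sprint-after-sprint evidence the scenario describes.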

Why the Other Options Are Incorrect:

A. The definition of done (DoD): The Definition of Done is an agreed-upon checklist of criteria that a user story must meet to be considered complete. While a weak or ambiguous DoD can cause delivery problems, the DoD itself is not evidence that the team is missing its forecasts. It is a process standard, not a performance metric.

B. A burndown chart showing team finishes early sprint after sprint: This is the opposite of the problem described. If the team is consistently finishing early, it would be evidence that they are exceeding their commitments, which would contradict the CTO's claim and support the CEO's push for more output.

C. A Kanban board showing there’s always the maximum allowed amount of work in progress (WIP): While this indicates a potential bottleneck in the workflow, it does not directly measure the team's ability to meet sprint forecasts. A full "In Progress" column shows that work is started but not finished, which is a symptom of poor flow or multitasking. However, it doesn't provide the historical, sprint-based evidence of missed commitments that the burndown chart does. It points to a process inefficiency, but not the specific outcome of consistently missing sprint goals.

Key References & Concepts:

Agile Metrics: The burndown chart is a primary metric for tracking sprint progress. Velocity (the average amount of work a team completes in a sprint) is derived from this historical data.

Sprint Commitment: In Scrum, a team forecasts the work it believes it can complete in a sprint. Consistently missing this forecast indicates a problem with planning, external interruptions, underestimated complexity, or technical debt.

Data-Driven Decisions: The role of an architect or leader is to move the conversation from subjective claims ("we can't do more") to objective data. A burndown chart provides the indisputable evidence needed to analyze the root cause and adjust plans or processes accordingly.

Due to several issues, Universal Containers wants to have better control over the changes made in the production org and to be able to track them. Which two options will streamline the process? (Choose 2 answers)

A. Make all code/configuration changes directly in the production org.

B. Allow no code/configuration changes directly in the production org

C. Use the Force.com IDE to automate deployment to the production org

D. Use Metadata API to automate deployment to the production org

Explanation:

Why these two streamline control and tracking

✅ B. Allow no direct changes in production

If admins and developers cannot make changes directly in production, then:

All changes must go through sandboxes, version control, and a formal deployment process.

You automatically gain better control, traceability, and auditability:

- What was changed

- When it was changed

- Who deployed it

- From which source branch / sandbox

This is a core governance best practice in Salesforce.

✅ D. Use Metadata API to automate deployment

Using the Metadata API (via tools like Ant, Salesforce CLI, CI/CD pipelines, etc.) to deploy to production:

Enables scripted, repeatable deployments

Allows you to:

- Store deployment scripts in version control

- Track what was deployed and when

- Integrate with CI tools for logs and history

This directly supports better control and tracking of changes.
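To make the tracking idea concrete, here is a minimal sketch of the kind of record a deployment pipeline could write for each production deployment. The field names, the example user, branch, and component names are all hypothetical; a real CI tool would capture equivalent details in its own logs.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class DeploymentRecord:
    deployed_by: str        # who deployed it
    source_branch: str      # from which source branch
    target_org: str         # where it was deployed
    components: List[str]   # what was changed
    deployed_at: str = field(  # when it was changed (UTC, ISO 8601)
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def append_record(log: List[dict], record: DeploymentRecord) -> List[dict]:
    """Add one deployment to an in-memory audit log and return the log."""
    log.append(asdict(record))
    return log


audit_log: List[dict] = []
append_record(
    audit_log,
    DeploymentRecord(
        deployed_by="release.manager@example.com",  # hypothetical user
        source_branch="release/2026-05",            # hypothetical branch
        target_org="production",
        components=["ApexClass:InvoiceService", "CustomObject:Invoice__c"],
    ),
)
print(json.dumps(audit_log, indent=2))
```

Because every change reaches production through the scripted path, a log like this answers the what, when, who, and from-where questions without relying on memory.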

Why the others are not appropriate

❌ A. Make all code/configuration changes directly in the production org

This is the opposite of control and tracking. Changes are ad hoc, hard to trace, and risky.

❌ C. Use the Force.com IDE to automate deployment to the production org

The Force.com IDE does use the Metadata API, but it is an interactive developer tool (since retired by Salesforce), not a mechanism for scripted, repeatable deployments. Between C and D, Metadata API-based automation (option D) is the more correct and future-proof choice.

So, the two best options to improve control and traceability of production changes are:

✅ B and D

Cloud Kicks (CK) is launching a new sneaker line during the upcoming holiday season and needs to do thorough batch data testing before go-live. CK is using Salesforce Unlimited Edition. What two sandbox types should the architect recommend for batch data testing? Choose 2 answers

A. Developer Pro sandbox

B. Partial Copy sandbox

C. Developer sandbox

D. Full sandbox

Explanation:

Batch data testing requires an environment with a significant volume of data that accurately represents the production org's data model and relationships. This is necessary to validate that business logic, workflows, reports, and data processing (like batch Apex) perform correctly under realistic conditions. The choice depends on the specific needs for data volume and the required refresh interval.

B. Partial Copy Sandbox ✅

This sandbox type includes a sample of your production data (up to 5 GB of data, and up to 10,000 records per selected object). A sandbox template defines which objects are copied, and the sample keeps related records together so referential integrity is maintained. This makes it a good fit for batch data testing: it provides a substantial, realistic dataset for testing performance and logic without the overhead of a complete copy. It strikes a balance between data volume and refresh frequency (it can be refreshed once every 5 days).

D. Full Sandbox ✅

This is a complete replica of your production org, including all data and metadata. With the same storage limits as your production org, it is the definitive environment for performance testing, load testing, and final staging before go-live. It is perfectly suited for thorough batch data testing with 100% of the data. However, its refresh interval is much longer (every 29 days), so it is used for major testing milestones.

Why Other Options Are Incorrect ❌

A. Developer Pro Sandbox and C. Developer Sandbox

→ These sandbox types are designed for development and coding tasks, not for data-intensive testing.

→ They copy all of the production org's metadata but none of its data, so any test records have to be created or loaded manually.

→ Their data storage capacity is too small (Developer Pro: 1GB, Developer: 200MB) to support any meaningful batch data testing.

→ Using them for this purpose would result in tests that fail to uncover data-related issues, performance bottlenecks, or errors in batch processing logic that only appear with large data volumes.
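The selection logic above can be sketched as a small lookup. The storage figures are the ones quoted in this explanation; the function name and the "provides production data" flag are illustrative assumptions, not a Salesforce API.

```python
from typing import Dict, List, Optional

# Data storage limits in MB as quoted above; None means "same as production".
DATA_LIMIT_MB: Dict[str, Optional[int]] = {
    "Developer": 200,
    "Developer Pro": 1024,
    "Partial Copy": 5 * 1024,
    "Full": None,
}

# Whether a refresh gives the sandbox a realistic production data set.
PROVIDES_PRODUCTION_DATA: Dict[str, bool] = {
    "Developer": False,
    "Developer Pro": False,
    "Partial Copy": True,
    "Full": True,
}


def suitable_for_batch_data_testing(test_data_mb: int) -> List[str]:
    """Sandbox types that hold the test data set and carry production data."""
    suitable = []
    for sandbox_type, limit in DATA_LIMIT_MB.items():
        if not PROVIDES_PRODUCTION_DATA[sandbox_type]:
            continue
        if limit is None or test_data_mb <= limit:
            suitable.append(sandbox_type)
    return suitable


print(suitable_for_batch_data_testing(3000))
print(suitable_for_batch_data_testing(20000))
```

For a data set that fits within 5 GB both Partial Copy and Full qualify; beyond that, only a Full sandbox does.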

References 📖

Salesforce Help: Sandbox Types and Storage Limits

Salesforce Help: Get Started with Sandboxes

Universal Containers is a global organization that maintains regional production instances of Salesforce. One region has created a new custom object to track Shipping Containers. The CIO has requested that this new object be used globally by all Salesforce instances and further maintained and modified regionally by local administrators. Which two deployment tools will support this request? (Choose 2 answers)

A. Tooling API

B. Force.com IDE

C. Change sets

D. Force.com Migration Tool

Explanation:

The key detail in the scenario:

“Universal Containers is a global organization that maintains regional production instances of Salesforce.”

That means we’re dealing with multiple, unrelated Salesforce orgs (not sandboxes of the same org). To share a custom object (and maintain it over time) across those orgs, we need deployment tools that can move metadata between unrelated orgs.

✅ B. Force.com IDE

Historically (and in exam context), the Force.com IDE:

- Connects to any Salesforce org via credentials.

- Can retrieve and deploy metadata (including custom objects) between separate orgs.

- Supports ongoing maintenance by pulling down changes, editing, and redeploying.

So it can be used to export the Shipping Container object from the regional org and deploy it into other regional orgs.

✅ D. Force.com Migration Tool

The Force.com Migration Tool (Ant-based) is a scriptable deployment tool that uses the Metadata API:

- Can retrieve the custom object from one org (source).

- Can deploy it to any other org (target), even if they aren’t related (no sandbox/prod relationship needed).

- Great for repeatable, automated deployments across many regional instances.

- Perfect match for “used globally by all Salesforce instances” and then updated regionally as needed.
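Metadata API-based tools such as the Migration Tool decide what to retrieve or deploy from a `package.xml` manifest. The sketch below generates a minimal manifest for the new object. The API name `Shipping_Container__c` and the API version `60.0` are assumptions for illustration; the scenario does not state either.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List

# XML namespace used by Metadata API manifests.
METADATA_NS = "http://soap.sforce.com/2006/04/metadata"


def build_package_xml(members_by_type: Dict[str, List[str]], api_version: str) -> str:
    """Return a package.xml manifest listing the given metadata components."""
    package = ET.Element("Package", xmlns=METADATA_NS)
    for type_name, members in sorted(members_by_type.items()):
        types = ET.SubElement(package, "types")
        for member in sorted(members):
            ET.SubElement(types, "members").text = member
        ET.SubElement(types, "name").text = type_name
    ET.SubElement(package, "version").text = api_version
    return ET.tostring(package, encoding="unicode")


# Hypothetical object API name and API version.
manifest = build_package_xml({"CustomObject": ["Shipping_Container__c"]}, "60.0")
print(manifest)
```

The same manifest can be reused against each regional org, since the tool only needs credentials for the source and target; no sandbox-to-production relationship is involved.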

Why the others are not correct

❌ A. Tooling API

The Tooling API is more for:

- Working with smaller metadata units (e.g., Apex classes, triggers, some setup entities).

- Supporting developer tools and IDEs.

It’s not intended as a primary cross-org deployment mechanism for metadata like a full custom object model across multiple orgs.

❌ C. Change sets

Change sets:

- Only work between related orgs, meaning a production org and the sandboxes created from it (e.g., sandbox → production, or sandbox → sandbox of the same production org).

- You cannot send a change set between independent, unrelated Salesforce orgs.

Here, the regional production instances are separate orgs, so change sets won’t satisfy the global deployment requirement.

Exam takeaway

When you see “multiple independent production instances” and the need to share metadata globally, think:

❌ Not Change Sets

✅ Use Metadata API–based tools → Force.com IDE and Force.com Migration Tool

So the correct choices are:

✅ B. Force.com IDE

✅ D. Force.com Migration Tool

Universal Containers has just initiated a project involving a large distributed development and testing team. The development team members need access to a tool to manage requirements and the testing team needs access to a tool to manage defects. Additionally, stakeholders are requesting ad hoc status reports. What tool should an Architect recommend to support the project?

A. Spreadsheets

B. Code Repository

C. Wave

D. Project management tool

Explanation:

Universal Containers has a large distributed team working on a new project. They need tools to manage requirements, track defects, and generate ad-hoc status reports for stakeholders. This is exactly what a project management tool is designed to handle.

D. Project management tool ✅

A project management tool (such as Jira, Rally, or Azure DevOps) allows teams to document and track business requirements, user stories, and test cases. It also provides defect tracking features so the testing team can log, assign, and resolve issues. In addition, these tools generate real-time dashboards and reports that stakeholders can access at any time. This helps everyone stay aligned and informed, even in a large, distributed team.

Why Other Options Are Incorrect ❌

A. Spreadsheets 🚫 While spreadsheets can store lists and basic data, they are not designed for collaboration, workflow tracking, or automated reporting. They become difficult to manage in large teams and do not scale well for real projects.

B. Code Repository 🚫 A code repository (like GitHub or Bitbucket) is used to store and manage source code. It does not manage requirements, test cases, or defects, and it is not a reporting tool for stakeholders.

C. Wave 🚫 Wave (later renamed Einstein Analytics, then Tableau CRM, and now CRM Analytics) is an analytics tool for business data. It is not designed for requirement tracking or defect management. It can create reports and dashboards, but it does not provide the full project management features needed by development and testing teams.

References 📖

Salesforce Architect Guide: Selecting Tools for Distributed Development

Atlassian Jira documentation – Managing Requirements and Defects

Salesforce Help: Best Practices for Agile Project Management

Universal Containers (UC) has multiple development teams that work on separate streams of work, with different timelines. Each stream has different releases of code and config, and the delivery dates differ between them. What is a suitable branching policy to recommend?

A. Leaf-based development

B. Trunk-based development

C. GitHub flow

D. Scratch-org-based development

Explanation:

Universal Containers has multiple development teams working on separate streams of work with different timelines and release schedules. This means they need a branching policy that supports parallel development, allowing each team to work independently without interfering with others. Let’s explore why leaf-based development is the best choice:

A. Leaf-based development ✅

Leaf-based development (also known as feature branching) allows each team to work on their own branch, called a "leaf," for their specific stream of work. These branches are created from a main branch (like "main" or "develop") and are used for developing features or configurations. Each team can work on their own timeline, test their changes, and merge their branch back to the main branch when ready. This approach supports UC’s need for separate streams with different release dates, as it keeps each team’s work isolated until it’s time to integrate.

Why Other Options Are Incorrect ❌

B. Trunk-based development:

In trunk-based development, all developers work directly on a single branch (the "trunk"). This is great for fast, continuous integration but can cause conflicts when multiple teams work on different timelines, as UC does. It’s not ideal for managing separate streams with distinct release schedules.

C. GitHub flow:

GitHub flow is a simplified branching model where developers create feature branches and merge them into the main branch via pull requests. While similar to leaf-based development, it’s designed for simpler workflows and may not scale well for UC’s complex setup with multiple teams and release schedules. Leaf-based development is more flexible for enterprise scenarios like UC’s.

D. Scratch-org-based development:

Scratch orgs are temporary Salesforce environments used for development and testing in Salesforce DX. This is not a branching policy but a tool for development. It doesn’t address how code and configurations are managed across branches or teams.

References 📖

Salesforce Help: Salesforce DX Developer Guide - Branching Strategies

Trailhead: Adopt a Branching Strategy for Salesforce Development

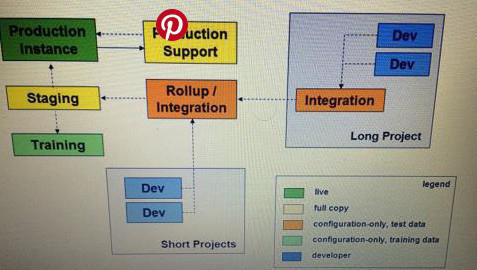

Which statement is true for the Staging sandbox in the following diagram?

A. When created or refreshed, the Staging sandbox is a full replica of Production

B. The Staging sandbox is automatically refreshed on a schedule set by the administrator

C. Salesforce major releases (e.g., Winter to Spring) always occur in Staging and Production at the same time

D. The Staging environment can only be updated once every two weeks

Explanation:

The diagram uses standard Salesforce sandbox icons and labels. The icon for the "Staging" sandbox is the same as the "Production" icon but in a different color, and it is labeled "full copy." This is the key identifier.

Let's analyze each option:

A. When created or refreshed, the Staging sandbox is a full replica of Production:

(Correct) In Salesforce, a "Full Copy" sandbox is intended as a full replica of the production org, including all data and metadata. The diagram explicitly labels the Staging sandbox as "full copy," confirming this is its type. Therefore, every time it is created or refreshed, it will be a complete copy of Production at that point in time.

B. The Staging sandbox is automatically refreshed on a schedule set by the administrator:

(Incorrect) Salesforce does not refresh sandboxes automatically; an administrator initiates each refresh, and a Full Copy sandbox cannot be refreshed more than once every 29 days. The diagram does not indicate anything about a refresh schedule either.

C. Salesforce major releases (e.g., Winter to Spring) always occur in Staging and Production at the same time:

(Incorrect) This is false because of the word "always." Salesforce runs a sandbox preview program: sandboxes on preview instances are upgraded to a new major release several weeks before production, which lets customers test their applications against the new release. Only sandboxes on non-preview instances are upgraded in the same window as production.

D. The Staging environment can only be updated once every two weeks:

(Incorrect) This statement is ambiguous but incorrect. If "updated" refers to refreshing the sandbox from production, the limit is once every 29 days for a Full Copy sandbox, not two weeks. If "updated" refers to deploying changes to it, there is no platform-enforced limit; deployments can happen as often as the development lifecycle allows.
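The refresh limits discussed for options B and D reduce to simple date arithmetic. The sketch below uses the documented minimum refresh intervals (29 days for Full, 5 days for Partial Copy, 1 day for Developer and Developer Pro); the function names and the sample dates are illustrative assumptions.

```python
from datetime import date, timedelta

# Minimum number of days between refreshes, per sandbox type.
REFRESH_INTERVAL_DAYS = {
    "Developer": 1,
    "Developer Pro": 1,
    "Partial Copy": 5,
    "Full": 29,
}


def next_eligible_refresh(sandbox_type: str, last_refresh: date) -> date:
    """Earliest date on which the sandbox can be refreshed again."""
    return last_refresh + timedelta(days=REFRESH_INTERVAL_DAYS[sandbox_type])


def can_refresh(sandbox_type: str, last_refresh: date, today: date) -> bool:
    """True if the minimum refresh interval has elapsed."""
    return today >= next_eligible_refresh(sandbox_type, last_refresh)


last = date(2026, 4, 1)  # hypothetical last refresh of the Staging sandbox
print(next_eligible_refresh("Full", last))
print(can_refresh("Full", last, date(2026, 4, 15)))  # two weeks later
```

Two weeks after a refresh, a Full sandbox is still inside its 29-day window, which is why the "once every two weeks" wording in option D does not match the platform limit.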

Reference:

Salesforce Help: "Sandbox Types" - The documentation for "Full Sandbox" states: "A Full sandbox is intended for use as a testing environment. It includes all of your production organization's data and metadata." The diagram uses the standard icon and label for this sandbox type.

A Salesforce Administrator has initiated a deployment using a change set. The deployment has taken more time than usual. What is the potential reason for this?

A. The change set includes changes to permission sets and profiles.

B. The change set includes Field type changes for some objects.

C. The change set includes new custom objects and custom fields.

D. The change set performance is independent of the included components.

Explanation:

The performance of a Salesforce deployment, particularly with change sets, is not uniform and depends heavily on the type of metadata included. Some changes are more complex and require more processing time on the Salesforce platform, leading to longer deployment times.

Why Field Type Changes are a Potential Reason ✅

A field type change (e.g., changing a field from Text to a Picklist) is a data-intensive and potentially destructive operation. Salesforce must perform a series of complex actions to ensure data integrity during this process:

1. Validation and Conversion: Salesforce must validate all existing data in the field to see if it can be successfully converted to the new field type. If the data is incompatible, the deployment will fail.

2. Background Processing: Unlike other metadata changes, a field type change can trigger extensive background processing to update every record in the target org that contains data in that field. This is a resource-intensive operation that can take a significant amount of time, especially in orgs with a large volume of data.

3. Rollback Mechanism: Because of the potential for data loss, the entire transaction is designed to be atomic. If any part of the field type conversion fails, the entire deployment is rolled back, which also adds to the total processing time.

Why Other Options Are Incorrect ❌

A. The change set includes changes to permission sets and profiles: While permission sets and profiles are essential for access control, changes to them are generally lightweight metadata operations. They don't require the same level of data processing as a field type change and therefore do not typically cause significant deployment delays on their own.

C. The change set includes new custom objects and custom fields: Creating new objects and fields is a standard metadata creation process. While the deployment will include all the associated components (e.g., page layouts, list views), it does not involve the complex data validation and conversion of existing records that a field type change does. This is a much faster operation.

D. The change set performance is independent of the included components: This statement is incorrect. As demonstrated above, the type of components included in a change set is the primary determinant of its deployment time. Deployments with complex components like field type changes, large Apex classes with extensive test coverage, or components with a high number of dependencies will take much longer than a simple deployment of a new custom field.

Universal Containers (UC) had implemented two full sandboxes. One, known as Stage, is used for performance, regression testing, and production readiness check. The other is used primarily for user acceptance testing (UAT). Both full sandboxes were refreshed two months ago. Currently, UC is targeting to start user acceptance testing in two weeks, and do production release in four weeks. An admin also realized Salesforce will have a major release in six weeks. UC needs to release on the current Salesforce version, but also wants to make sure the new Salesforce release does not break anything. What should an architect recommend?

A. Refresh Stage now, and do not refresh UAT. This way, Stage will be on preview and UAT will not.

B. Use the Sandbox Preview Guide to check if there is any necessary action needed. UC might have to prepare, refresh, and redeploy to UAT.

C. Visit trust.salesforce.com to figure out the preview cutoff dates. If the dates have passed, work with support to get on the preview instance.

D. Refresh Stage from UAT now. After the preview cutoff, use the upgraded one for regression testing and the non-upgraded one for user acceptance testing.

Explanation:

Salesforce has three major releases each year, and sandboxes can either stay on the current version (non-preview) or move to the new version (preview), depending on whether they are refreshed before the preview cutoff date.

Universal Containers needs to:

1. Release on the current Salesforce version (so UAT testing should stay on the current release).

2. Validate against the upcoming release to make sure nothing breaks (so one sandbox, like Stage, should be on preview).

The official process for managing this situation is to consult the Salesforce Sandbox Preview Guide. This guide tells you which sandboxes will be upgraded, the cutoff dates, and the steps needed (refreshing, redeploying) to align sandboxes with either the preview or the current version.

By following the guide, UC can ensure that one sandbox (Stage) is refreshed to move onto the preview release for regression testing, while the UAT sandbox stays on the current release for user acceptance testing.

Why Other Options Are Incorrect ❌

A. Refresh Stage now, and do not refresh UAT 🚫 This may or may not achieve the desired result, depending on the preview cutoff schedule. The Sandbox Preview Guide is required to confirm, so this option is incomplete.

C. Visit trust.salesforce.com 🚫 Trust provides release dates, status, and availability, but it does not give sandbox preview cutoff instructions or steps for aligning sandboxes. The Sandbox Preview Guide is the right resource.

D. Refresh Stage from UAT now 🚫 You cannot refresh one sandbox from another sandbox; refreshes only come from Production. This option is technically incorrect.

References 📖

Salesforce Sandbox Preview Guide

Salesforce Trust – for release schedules (supplementary, not the main solution here).

✨ Exam Tip: Always use the Sandbox Preview Guide to plan testing across different Salesforce release versions — it’s the architect’s go-to reference.

Universal Containers (UC) is preparing for the new Salesforce release in a couple of months, and has several ongoing development projects that may be affected. Which three steps should the team at UC take to prepare for this release? Choose 3 answers

A. Contact Salesforce to schedule a time to upgrade the full Sandbox.

B. Refresh a Sandbox during the Release Preview Window to ensure they have the upcoming release.

C. Run regression tests in an upgraded sandbox to detect any issues with the Upgrade.

D. Review the release notes for automatically-enabled features and technical debt.

E. Upgrade any SOAP integrations to the newest WSDL as early as possible

Explanation:

✅ B. Refresh a Sandbox during the Release Preview Window to ensure they have the upcoming release.

During the Release Preview Window, Salesforce lets you:

Refresh certain sandboxes so they’re upgraded to the next release early.

Use these preview sandboxes to test your in-flight changes and integrations before production is upgraded.

This is a key step to see how the new release will affect your org and projects.

✅ C. Run regression tests in an upgraded sandbox to detect any issues with the upgrade.

Once you have a preview sandbox on the new release:

Run your full regression test suite (automated + critical manual flows).

Verify that customizations, integrations, and major business processes still work.

Detect breaking changes, governor limit changes, behavior changes, etc.

This is one of the most important readiness steps.

✅ D. Review the release notes for automatically-enabled features and technical debt.

The team should carefully review the Salesforce release notes to identify:

Features that will be auto-enabled (they may change behavior in your org).

Changes that might affect deprecated features, old APIs, or technical debt.

New capabilities that may replace custom solutions you’re maintaining unnecessarily.

This helps you proactively plan remediation and enhancements.

❌ Why not the others?

A. Contact Salesforce to schedule a time to upgrade the full Sandbox.

You don’t schedule sandbox upgrades manually.

Sandbox upgrades follow Salesforce’s published upgrade calendar based on whether the sandbox is on a preview or non-preview instance. You manage this by refresh timing, not by calling Salesforce to set a custom upgrade time.

E. Upgrade any SOAP integrations to the newest WSDL as early as possible.

Not required as a generic “release prep” step:

Salesforce supports multiple older API versions for a long time.

You don’t need to upgrade to the newest WSDL every release unless:

You need new features only available in a higher version, or

The version you use is approaching deprecation.

So while keeping integrations modern is good practice, it’s not a key generic step for every release the way B, C, and D are.

